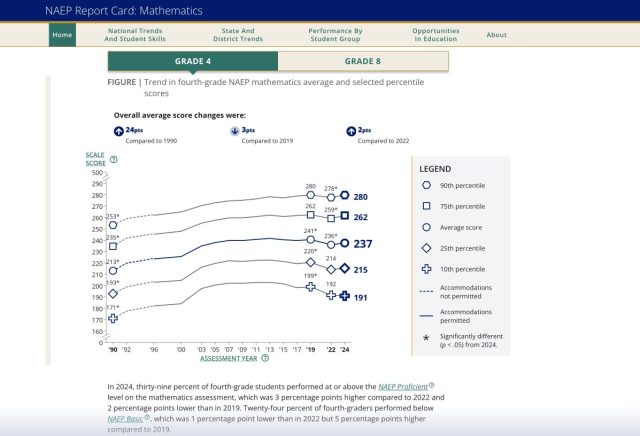

One of the most popular and well-scrutinized reports of NAEP reading and mathematics test results in recent years is the line graph showing performance over time for students at selected percentiles. Specifically, the report shows the scale score across NAEP administrations for students at the 90th, 75th, 50th, 25th, and 10th percentiles.

By now, we are all familiar with the report and the patterns it reveals. For years, the five groups of students appeared to move in lockstep from one NAEP administration to the next. The parallel lines showed increases, no change, and, rarely, decreases in performance across the board.

Then around a decade ago things started to change. With the 2017 NAEP administration we began to see the results for higher and lower performing students moving in opposite directions. Notwithstanding the pandemic blip in 2022, that trend continued in 2024 – and we know how NAEP loves its trends.

The gap between the high and low performers on NAEP is growing, particularly the gap between the highest performers (90th percentile) and lowest performers (10th percentile).

What does this mean?

The Sky Is Fine, But The Ground Is Sinking

Predictably, the realization that NAEP results have been falling steadily for a decade elicited a Chicken Little response; that is, The Sky Is Falling!

The percentile charts, however, appear to tell a different story. The sky is just fine, or at least it’s where it always was. The problem is that a sinkhole has opened up under our feet.

The rich get richer and the poor get poorer in reading and mathematics.

If you read between the percentile lines and do the math just right, you may conclude that most, if not all, of the decline in the overall mean NAEP mathematics and reading scores is due to the performance at the 10th percentile.

Which leads one to wonder what we know about the students at the 10th percentile and also leads to today’s focus on the number 20.

A Thought Experiment

Although the results presented above are national averages, the phenomenon is similar in states across the nation. Therefore, for the purpose of our little thought experiment regarding those 10th percentile kids, let’s focus on the state level.

First things first. What is a percentile? We’ve all grown up in our norm-referenced worlds with the definition that the percentile represents the percentage of students scoring at or below a particular point. If your achievement test or SAT score was at the 95th percentile, you performed equal to or better than 95% of the population to which you were being compared.

Sometimes lost in that definition, however, is the understanding that each percentile is a slice of 1/100th of the score distribution. Each percentile contains 1% of the students tested.

In other words, your 95th percentile score was equal to the score of the 1% of kids also at the 95th percentile and better than the scores of the 94% of kids in the 1st through 94th percentiles.
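For readers who like to see a definition in action, here is a minimal sketch of the "at or below" percentile definition described above. The `percentile_rank` function and the 100 made-up scores are my own illustrative assumptions, not NAEP data or NAEP's actual methodology.

```python
def percentile_rank(scores, score):
    """Percentage of scores that are at or below `score`."""
    at_or_below = sum(1 for s in scores if s <= score)
    return 100 * at_or_below / len(scores)


# Hypothetical data: 100 students with scores 1 through 100 (not NAEP scores).
# With exactly 100 students, each percentile slice holds 1 student (1%).
scores = list(range(1, 101))

# The student scoring 95 ties with the 1 student at that score
# and outscores the 94 students below it.
print(percentile_rank(scores, 95))  # 95.0
```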

On NAEP mathematics and reading tests, the average sample size across states is approximately 1,900. At the state level, therefore, each percentile contains roughly 19 kids. For our purposes today, let’s round that to 20 – a nice round number and one that falls between the optimal and typical class size at the elementary and middle school levels. The other nice thing about 20 is that it’s a number we easily wrap our heads around.

Keeping in the back of our minds that there are another 180 kids at percentiles 1 through 9 presumably performing worse and about 1,800 kids at percentiles 11 through 99 performing increasingly better, let’s think about those 20 at the 10th percentile.
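The back-of-the-envelope arithmetic here can be sketched in a few lines. The names `SAMPLE_SIZE`, `ROUNDED_SLICE`, and `students_in_range` are hypothetical, chosen only for illustration; the 1,900 and 20 come from the text above.

```python
SAMPLE_SIZE = 1900   # approximate average state sample size cited above
ROUNDED_SLICE = 20   # the rounded-up number of students per percentile

# Each percentile is 1/100th of the sample.
per_percentile = SAMPLE_SIZE / 100
print(per_percentile)  # 19.0


def students_in_range(first, last, per_slice=ROUNDED_SLICE):
    """Students in percentiles `first` through `last`, inclusive."""
    return (last - first + 1) * per_slice


print(students_in_range(1, 9))    # 180
print(students_in_range(11, 99))  # 1780
```

The count for percentiles 11 through 99 comes out to 1,780, which is close enough to 1,800 for a thought experiment built on rounded numbers.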

The 20 at the 10th

Close your eyes and picture those 20 students. What do you see?

What do you know, what do you not know, and what do you want to know?

What questions will you ask about these students? I’ll say that there are no wrong questions (or answers), but you know that’s not really true.

If you’re coming at this from a competency or skills-based bent, you might ask what these students know and are able to do.

Perhaps you are focused on the educational opportunities, or lack thereof, these kids have been afforded to this point in the fourth or eighth grade. What educational resources and support systems do they have in place or available at school and/or at home?

Perhaps your initial thoughts are about who these kids are. How many of the 20 are students with disabilities or English language learners? If they are English learners, how long have they been enrolled in school in the US and/or receiving services? How long have they been enrolled in the same district? Have they been in the same school/class all year?

How many of the 20 have been chronically absent this year or in previous academic years?

Do/can you picture them all in the same school or the same classroom?

If we zoom out to the whole distribution, do you picture these students in the same district, school, or classroom as students performing at the 90th, 75th, 50th, or 25th percentiles?

Now, looking back across the last decade, how, if at all, has this group of students changed?

We talk about performance improving or declining as if the students were a constant, but we know that’s probably not true.

On any of the factors that you deemed important above, how different or similar is the group of 20 students in 2024 from the corresponding 10th percentile group in 2015?

(Note that I avoided questions about grades, test scores, reading or numeracy levels, and race/ethnicity – at least directly. As I mentioned, there are wrong questions or at least some questions that will lead us in the wrong directions.)

Putting It Together

The good news is that we don’t have to rely on a thought experiment. States have systems in place and more than enough data to answer just about any relevant question one might think of regarding these 20 students, their teachers and schools, their predecessors and successors.

That data might not be as easily or directly available for NAEP with its sampling, models, and plausible deniability (oops, I mean values), but even with NAEP there’s enough there there to get the ball rolling.

The bad news, of course, is that all of the questions posed above are relevant and there likely will be multiple answers to each of them. There surely will not be a single, simple profile describing all 20 students. Still, knowing and understanding what you’re dealing with has always been better than running around shouting that the sky is falling.

Scaling Up

Our 20 NAEP students, of course, are just a starting point. As many have recommended, states will want to repeat this exercise with their own assessment programs – a 10th percentile pool of 500-600 perhaps in a medium-sized state. They will also want to look across grade levels and time.

And we’ll also want to jump back to the full NAEP and dig deeper on that national 10th percentile to inform decisions about what we might need in terms of federal policies and national policies (no, not all federal policies have to be national, one-size-fits-all policies).

Yes, the 20 students are just a starting point, but the important thing is that we have to start.