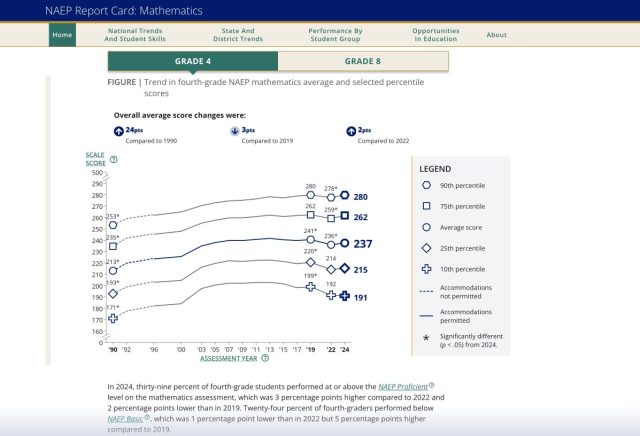

We have been struggling to understand the relationship between assessment and learning for as long as I can remember. Before we jump headlong into reimagining assessment, it will help to clarify what we mean by both terms and how they relate to each other.

Let’s begin with a simple fill-in-the-blank item: Assessment ______ Learning.