I argued in last week’s post that those of us involved in educational measurement and testing needed to do a better job at deriving meaning from our scales. Now, usually after I get the ideas in one post off of my chest, I’m ready to go back into my journal of snippets, observations, and ponderings and begin to craft a post on a new topic.

This week, however, gnawing at me was the feeling that the issue was more than our need to do a better job at developing meaningful scales. The issue was that something fundamental was missing from our definition and understanding of educational measurement. Something was missing, but what was it?

Start At The Very Beginning

To begin my search for an answer, I examined the definition of educational measurement cited in the Brookhart and Bonner article that was the jumping-off point for my post:

Measurement is a process that (a) provides information about a property of an object or event in the world, (b) uses instrumentation designed to be sensitive to differences in this property, (c) produces information in the form of values related to a scale or unit for that same property, and (d) is qualified by information about uncertainty. (Briggs et al, 2025, p. 1535)

That Briggs et al definition can be found in their chapter, On The Nature of Measurement, in the recently published 5th edition of Educational Measurement. Interestingly, as suggested by the page number, the definition of measurement appears in the final chapter of that epic tome. I’m not sure whether that positioning suggests that the definition and discussion of the nature of measurement is the logical culmination of all of the prerequisite information that comes before it or more of a subconscious reflection of our tendency in the field to ask questions of students (i.e., test) before we ask ourselves the difficult questions about what we are measuring and why.

As I considered that definition, my first reaction was that it was a far cry and a major step forward from the SS Stevens “definition” that many of us first learned, namely, “measurement involves the assignment of numbers to objects or events according to a rule(s).” To be fair to Stevens, the “according to a rule” portion of the quote is often inconveniently omitted when citing him.

Even more importantly, as I noted in a 2021 post, we further shortchange Stevens by failing to note that the oft-cited definition was merely a lead-in to the question, What are the rules?, and his observation that, “The problem as to what is and is not measurement then reduces to the simple question: What are the rules, if any, under which numerals are assigned?”

The definition by Briggs et al does, in fact, establish a set of such rules for the process of measurement. And also, to be fair to Briggs et al, if you read the complete chapter (which I strongly encourage), you will find their TISM framework and this in their expanded description of its “S” component, Scales and Units:

The development of scales and potentially units to ensure that the (usually numeric) values that result from measurement will have an interpretation that is meaningful, publicly explainable, and invariant (within specified limits) to the objects being measured, the instruments used to produce the numeric values, and the person who is interpreting the values. (p. 1536)

The inclusion of the condition that the development of scales must ensure an interpretation that is meaningful, publicly explainable (and invariant) is a critical step toward filling in the missing piece in the definition of educational measurement. However, it still completes the process of measurement with the scale (and modeling of error on that scale).

That the meaningful, publicly explainable interpretation should then reconnect measurement with the real world where it began is implied but not explicit. That gap between implicit and explicit is, in my opinion, still too wide, leaving open to interpretation who is responsible for making that interpretation and what qualifies as an acceptable interpretation. For example, do interpretations of effect sizes in terms of weeks, months, or years of learning meet the requirement for a meaningful, publicly explainable interpretation? If you follow this blog, you know where I stand on that question.

So, how do we make explicit the need to connect educational measurement to the real world?

Reconnecting Educational Measurement With The Real World

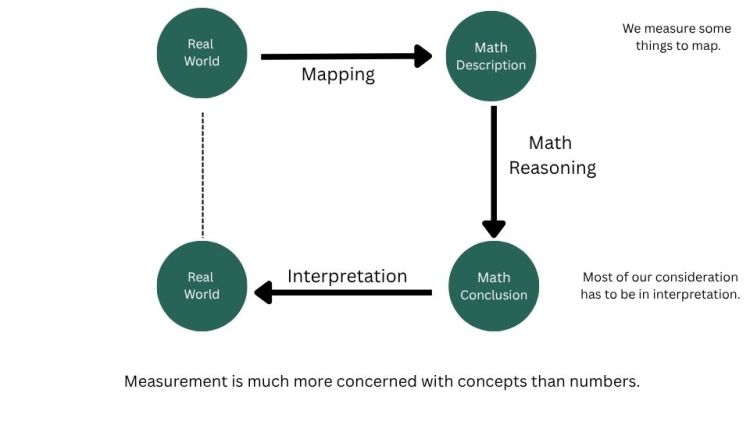

While sorting through some old papers (very old), I came across the notes from my first measurement course as a doctoral student at the University of Minnesota in January 1984. There at the top of my notes from the first class of the semester was a depiction of educational measurement that explicitly closed the loop.

Consistent with the current definition, the notes also made it clear that measurement is never perfect and that we must do everything necessary to identify sources of error a) to minimize alternative interpretations of the results of measurement and b) to counter alternative explanations.

In short behavioral terms, educational measurement involves stimuli and responses, and it is our responsibility to interpret those responses. It is our responsibility to reconnect our mathematical results to the real world through interpretations and to validate those interpretations back in the real world.

The end.

Yes, please quit while you’re ahead, Charlie.

Well, not so fast.

Validate?

Yes, of course, those interpretations that are “invariant to objects being measured, the instruments used to produce the values, and the person who is interpreting the values” must be validated.

Feel free to stop reading here. However, if you’re interested in a brief trip down the rabbit hole of validity, please read on.

Interpretations, Inferences, and The Elephant In The Room

It may be tempting when reading just the definition of measurement to conclude that those scale interpretations and their validation are not a measurement concern. That is a very real risk when stopping short of closing the loop that connects measurement back to the real world.

The Briggs et al chapter, however, includes a lengthy discussion of the complex issues surrounding terms and concepts such as measurement, testing, validity, and validation. It is easy to see how current thinking on validity and validation with a focus on uses and consequences, particularly as framed in the Standards for Educational and Psychological Testing, [emphasis added] connects validity and testing. The connection between validity and measurement (and specifically this definition of measurement) is a little less straightforward for the practitioner or casual assessment specialist.

Nevertheless, as I discussed at length in the validity chapter in Fundamental and Flaws, it is critical to confirm (validate?) that our measures (and measurement instruments) connect to the real world as we need them to do or that we have the right tool for the job at hand; that is, that our instrument of choice supports interpretations of differences in the construct with enough precision at critical points of interest along the scale.

That we have the right thermometer to measure the temperature in our freezer, our body temperature, or the sap we are boiling to make maple syrup.

That we have the right test to measure whether a student is proficient or to determine where they fall on the proficiency continuum.

Further, we must confirm those interpretations before we can begin to think about the validation of the inferences made from uses of the instrument and the consequences of those uses.

The end.

Image by Gerd Altmann from Pixabay
