You may view state testing as a monolith, an impersonal political, corporate structure indivisible and uniform, a large single upright block of stone with columns of ovals etched on it and a Pearson logo laser engraved in the bottom left corner.

But you would be wrong. The block of stone could just as easily bear the logo of the company formerly known as AIR Assessment. No wait, sorry. Different post. Reset.

But you would be wrong. Far from uniform, state tests and state assessment programs have tried just about every design under the sun over the past three decades. Although it might be exaggerating to say that there is nothing new under the sun, there is certainly quite a bit that has already been done with state testing which we can shine a light on to help guide us as we sit on the cusp of a new era in state assessment and accountability.

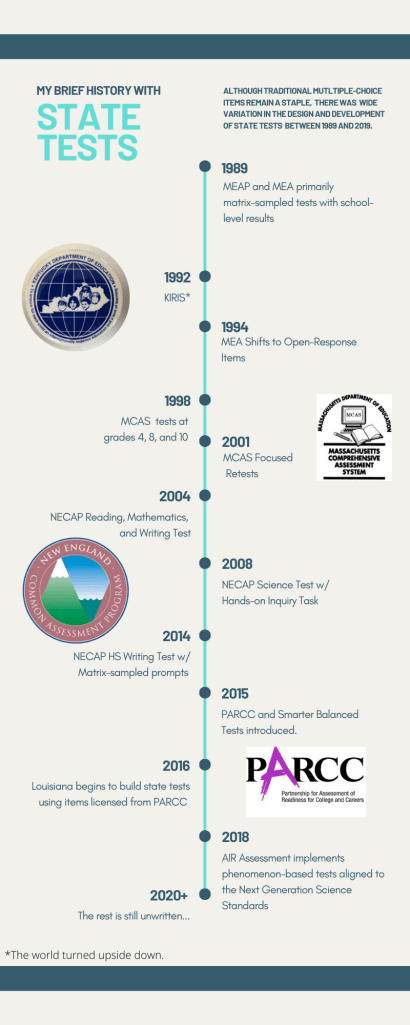

The brief history of my time in state testing began with the Massachusetts Educational Assessment Program (MEAP) and the Maine Educational Assessment (MEA) in the late 1980s and ended in 2019 with the initial administrations of the new phenomenon-based science tests aligned to the Next Generation Science Standards (NGSS) and developed by AIR Assessment (now Cambium Assessment).

During that time I saw a wide variety of approaches applied to the design of individual tests and to state assessment programs. Long before computer-based testing and interim assessments, Bock and Mislevy put forth a multi-stage adaptive design for state tests which relied on the U.S. Postal Service instead of bandwidth and a stable internet connection. The New Hampshire state assessment included a listening test in which students had to watch a video, provided on a VHS tape and played on a television at the front of the classroom, and then answer a series of questions. Some of the approaches, like the two examples above, were ahead of their time with regard to technology. Others, like portfolios and performance events, were ahead of their time in terms of technical quality and a clear vision of how they should be incorporated into state testing.

Given the current trends in state testing and the general acknowledgement that it’s time to reconsider the current assessment/accountability paradigm, I think that many of the former approaches to state assessment are worthy of reconsideration. This is about more than simply learning from the past and avoiding past mistakes. As the purpose of state testing becomes better defined over the next few years, some of the previously tried approaches, updated of course, may offer the best alternative for moving forward. A lot of thinking and effort went into inventing those wheels. Now may be the time that one or two of those wheels move from invention to innovation.

In the remainder of this post, I list key or notable features and developments associated with some of the varied state assessment programs with which I have been directly involved in some capacity (e.g., assessment contractor, DOE psychometrician, consultant, TAC member).

- Massachusetts Educational Assessment Program (MEAP)
  - Administered every other year from 1986 to 1996
  - Grades 4, 8, and 12
  - Totally matrix-sampled with group-level IRT used to produce aggregate scores
  - Student scores generated only to disaggregate results
  - Students completed one session (approx. 20 items) in each of four content areas: Reading, Mathematics, Science, Social Studies
  - School scores based on 300-400 items per content area

- Maine Educational Assessment (MEA) 1980s
  - Grades 4, 8, and 11
  - Each grade-level test administered at a different time of year (fall, winter, spring)
  - Matrix-sampled in science, social studies, arts and humanities to produce aggregate scores only
  - Common/Matrix-sampled in reading and mathematics to produce student scores
  - Two writing prompts – transitioned from analytic to annotated holistic scoring
  - State-level writing results “frozen” from year-to-year
  - Special studies every 4-5 years to determine new state mean for writing

- Maine Educational Assessment (MEA) 1990s
  - Transition from selected-response to constructed-response items
  - Initially, 50% constructed-response items, matrix-sampling across students
  - Eventually, 100% constructed-response items
  - Led to the development of the Body of Work standard setting method

- Kentucky Instructional Results Information System (KIRIS)
  - Assessment and Accountability Systems connected by design
  - Grades 4, 8, and 11/12 with state-provided “scrimmage tests” in off-years
  - Explored distributing subject-area tests across grades
  - Combination of performance-based assessment tasks: constructed-response items, performance events, portfolios
  - Student scores based on 3-5 open-response items per content area
  - Initially, results reported only in terms of performance levels, no scaled scores
  - School scores based on a composite of common and matrix-sampled items
  - Tests or performance events in reading, writing, mathematics, science, social studies, arts, humanities, practical living/vocational skills
  - Combined subject areas to create interdisciplinary performance events
  - Developed system for central auditing of locally-scored portfolios

- Massachusetts Comprehensive Assessment System (MCAS)
  - Originally Reading and Writing, Mathematics, Science, and Social Studies
  - Grades 4, 8, and 10
  - Tried various approaches to combining scores from Reading and Writing tests to produce an English Language Arts score
  - Significant percentage of points from constructed-response or short-answer items in each content area
  - Student test forms contain approx. 80% common items (used for scoring) and 20% matrix-sampled items
  - Embedded equating and field test items within the matrix-sampled portion of the test form

- MCAS High School Tests for Graduation
  - Attempted to develop retest forms with maximum information at the cutscore for high school graduation
  - Writing test contained a common literary analysis prompt but allowed (required) students to choose the book on which to base their response (something they had read on their own, something they had read in school, or, as it turned out, a movie they had seen)
  - Developed performance appeal based on using student coursework as a portfolio and test performance of students taking a similar pattern of courses as an anchor

- New England Common Assessment Program
  - Separate Writing test at grades 5, 8, and 11 containing selected-response items and a direct writing prompt as well as “planning items”
  - Grade 11 Writing transitioned to two writing prompts per student (common and matrix-sampled), with equating through scoring rubric and exemplars and school-level results reported on all prompts
  - Science test including a hands-on Science Inquiry Task with groupwork followed by individual assessment

- Louisiana Educational Assessment Program (LEAP 2025)
  - Test forms built primarily from licensed PARCC items
  - Resolved equating challenges associated with using a new set of licensed items each year
  - Resolved challenges associated with linking to the PARCC scale while making state-specific adjustments to the test blueprint

- AIR NGSS Science Assessment
  - Return to matrix-sampling, but using student-level scores as the primary unit of analysis
  - Resolving challenges associated with field testing across and within multiple groupings of states under different testing conditions
  - Phenomenon-based design based on student interactions with stimuli rather than clearly identified scorable test items
  - Applied a bifactor model
  - Led to a new approach to standard setting

As I said, "nothing new under the sun" may be an exaggeration. There are new constructs to assess: some, like 21st-century skills, that have been around for a long time now, and others that have not been thought of yet. There will be personalized learning systems and embedded assessments that I cannot imagine. With regard to approaches to designing end-of-year state testing programs to gather information on student proficiency, however, I have certainly seen a lot.

Come to think of it, there is one approach to collecting information about student proficiency that we have not tried that I think might have some merit: Let’s ask teachers to tell us which of their students are proficient.

Image by Clker-Free-Vector-Images from Pixabay
