Nothing lasts forever.

All good things must come to an end.

To everything there is a season.

Ashes to ashes. Dust to dust.

Whenever it’s time to revise or repair something, you have to ask yourself whether a revision is the best option or whether it is time for something more radical.

Do I fix the transmission or is it time to move on from my 2014 Toyota Corolla?

The same decision-making process applies to bigger decisions like whether to enter into another cycle of upkeep on your current house or downsize to something smaller (perhaps it’s time to move to a senior community in a warmer climate) and to smaller decisions like whether to renew that journal subscription (no) or association memberships (no, no, yes, maybe).

It’s only logical, therefore, as the process of revising the Standards for Educational and Psychological Testing appears to be beginning again, to ask ourselves the same set of questions we ask when making those other decisions.

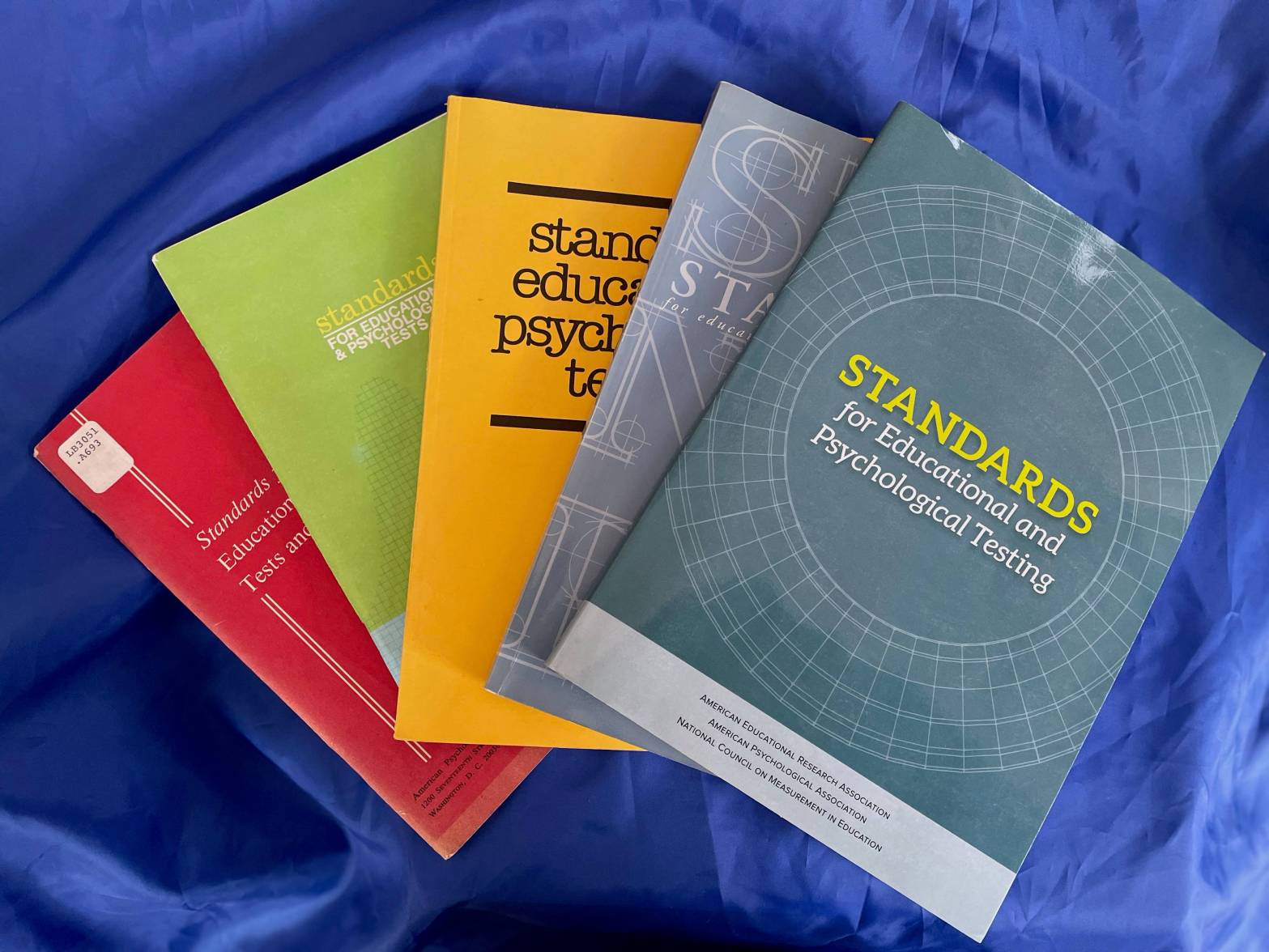

Does it make sense for AERA, APA, and NCME to publish another revision of the Standards or is it time to do something more radical? Since first being published in 1966, the joint Standards have served us well through four revisions in 1974, 1985, 1999, and 2014, but perhaps it is time for something else to take their place.

Note that this is different than simply asking whether the 2014 edition of the Standards needs to be revised or whether my Corolla needs a new transmission. Those questions are relatively easy to answer. Having decided that a change is needed, deciding what direction to take can be much more difficult. Inertia makes it easy to convince ourselves to keep the car, stay in the current house, or revise the Standards. In the long run, however, making a change might be the better decision.

In this post, I present five issues for the field to consider before embarking on the next revision of the joint Standards.

Why AERA, APA, and NCME?

The childhood taunt, “Who died and made you boss?” comes to mind. OK, I’ll admit that taunt has crossed my mind (and lips) more than a few times since childhood, so perhaps childish is a better description than childhood. Nevertheless, the question should be asked whether AERA, APA, and NCME are the best choice of organizations to oversee these testing standards.

Accepting that AERA, APA, and NCME made perfect sense in 1966, are they still the best choice? A lot has changed since I was 7 years old. None of them were ever testing organizations per se, but that is not necessarily a bad thing. You definitely wouldn’t want to create a fox guarding the henhouse situation. On the other hand, it might make sense for testing to be a primary focus of the organizations responsible for the Standards.

At least one of these three organizations has self-identified as anti-testing – not a dealbreaker, but something to be considered. Another of the triumvirate was pretty tightly associated with testing back in 1966, but its focus in recent decades may have drifted more toward basic measurement than applied testing. And as I learned from Derek Briggs, “testing and measurement are two distinct activities.”

Plus, educational and psychological testing today seem to encompass a much broader array of social, technical, legal, and perhaps even ethical and moral issues than they did in 1966 – or than we realized or acknowledged in 1966. Do we need to expand the list of players involved in the development of the Standards to best account for all of those issues? Consider the list of sponsoring organizations of the Joint Committee on Standards for Educational Evaluation (JCSEE).

We want to be sure to have the proper representation at the table when producing something as important as the Standards.

Don’t bite off more than you can chew

Is there still enough common ground, or overlap, between educational and psychological testing that they can be covered adequately by the same set of Standards? Yes, both still involve administering “tests” to people, but, one could argue, increasingly not the same kinds of test instruments for the same kinds of purposes and uses, or to support the same types of policies and decisions.

Even within educational or psychological testing, does a single set of standards make sense?

Imagine having one set of standards for “air travel” that attempted to be applicable to everything from kites to recreational drones to hot air balloons to small private planes to private jets to commercial airlines to military aircraft to spacecraft to space stations to spy balloons from China (topical reference that will be removed later).

Could you identify a core set of principles and safety standards that might be applicable across the board? You probably could. But they would have to be written in a way that was sufficiently vague, making them not particularly useful for any one specific application.

Always a Step Behind

By its very nature, a document like the joint Standards runs the risk of being out of date before its ink has had a chance to dry.

When the 1985 edition of the Standards was published, I don’t think that many people foresaw that a 1987 article by a West Virginia physician would result in a seismic shift from norm-referenced to criterion-referenced testing. Not to mention the havoc that Messick wrought with Validity in 1989.

Alignment, adequate yearly progress, and annual measurable objectives were not the major concerns they would soon become when the 1999 edition of the Standards was published.

Note that debates about validity were well-known throughout the 1980s and Webb’s seminal monograph on alignment was published in 1997, but neither issue had reached the tipping point where it touched everything to do with testing by the time of the 1985 and 1999 revisions of the Standards, respectively.

The 2014 edition played catch-up with issues related to accessibility and the use of testing for school accountability, and also at least laid out many of the issues that would have to be considered with the long-anticipated shift to computer-based and adaptive testing.

However, I don’t know that anyone in our little corner of the educational testing world foresaw the licensing of items, breaking up of tests into partial forms, the resurgence of item banks created piecemeal, wholesale abandonment of testing windows to facilitate computer-based testing, or the testing challenges raised by the Next Generation Science Standards.

I have to wonder whether this model of bringing together three organizations every decade or so to spend several years creating a static document and then go their separate ways is the best model to follow in this rapidly changing world.

I point to AERA policy statements on topics like the use of large-scale tests for high-stakes decisions like graduation and the use of value-added measures for teacher evaluation, and the often tortuous and torturous attempts by NCME at crafting consensus policy statements in recent years, as evidence of the unevenness of the current process.

Might it not make more sense to have a standing committee in place that allows the Standards to function more as a living document? This committee would have greater responsibility than the Standards Management Committee created in 2005 to oversee revision of the document. The envisioned committee would be able to respond in a timely manner to unanticipated issues or changes in the field while at the same time being able to identify the innovations among the many inventions introduced and distinguish fads from fundamentals.

Further, viewing the Standards as a living document would be consistent with the statement included on the copyright page of the 1985 and 1999 revisions of the Standards:

The Standards for Educational and Psychological Testing will be under continuing review by the three sponsoring organizations. Comments and suggestions will be welcomed…

Tests → Testing → ???

With the 1985 revision, the focus and title of the joint Standards shifted from tests to testing. As written in the preface to the 1985 edition, number one in the list of guidelines that “governed the work of the revision committee” was that the Standards should “address issues of test use in a variety of applications.”

Four decades later, it is clear to me that it is time for another change to the title. In short, it’s time to move on from the limits imposed by the word test and the concept of testing.

If the goal is to keep the focus on the use of data to inform education policy and decision making, limiting the Standards to something called testing is wholly inadequate. The emerging work of Alina von Davier and others in computational psychometrics, machine learning, AI, etc. should make it clear that the future of informing educational decision-making will go well beyond anything that can be adequately covered under the small umbrella of the term testing. Yes, traditional tests may supply some of the data used in the emerging model, but they will play no more than a minor role in the overall process.

And no, this is not a case of attempting to anticipate the future via the Standards. The post-testing future is already here. Kathleen Scalise and Kristen Huff, among others, have been sounding the alarm (my interpretation) on the pervasive influence of data science and learning analytics for nearly a decade now (perhaps longer).

Even something as mundane as federally mandated school accountability systems, commonly referred to as test-based accountability systems, has already moved educational policy and decision-making beyond testing. It is true that those systems currently use results from state tests to derive indicators of academic achievement and student growth, but tests and testing are not the only way to arrive at the estimates of student proficiency that fuel those systems. As I argued in my October 2020 post, Would AYP Have Sucked Less Without Test Scores?, the failings of adequate yearly progress as a vehicle to determine school effectiveness and inform educational policy had little to do with state tests. The same applies to school accountability as a whole.

Is Standards for the Use of Data to Inform Education Policy and Decision Making too unwieldy for a title? Perhaps the title change is as simple as changing the word testing to assessment, but we’ll save that debate and discussion for a later post.

These may not be the Standards You’re Looking For

Finally, we must remain open to the idea that the joint Standards are not the only game in town. That is, they are not the only standards we should be using to inform education policy and decision-making and they may not be the most relevant standards for a particular application or use of test results. The Classroom Assessment Standards for PreK-12 Teachers produced by JCSEE is one example.

At the risk of turning this post into an advertisement for the JCSEE, when I was in graduate school in the mid- to late-1980s, the one set of standards that we carried with us at all times was the Program Evaluation Standards. We referred to the 1985 revision of the joint Standards, of course, but the program evaluation standards were much more applicable to our use of tests and test results. I would argue that those standards have gotten short shrift in recent years and it’s unfortunate that they have not played a greater role in the post-NCLB era of test-based school accountability.

Moving forward, perhaps we will be able to take a more holistic view of, and a more inclusive approach to, how we use standards to guide the use of data to inform educational policy and decision-making.
