For more than two decades now it has sat here on the corner of my desk. A rusty, humbling reminder that some aspects of this thing that we call large-scale testing are simply beyond our ken.

There are those times when we know we’ve done everything right, followed all the rules, operationalized best practices, and assured quality, yet at the end of the day we find ourselves shaking our heads and asking:

Just what is it that makes an item difficult?

Setting the Scene

It was the fall of 1999, and I was officially part of the Massachusetts Comprehensive Assessment System (MCAS) again, this time working with the Massachusetts Department of Education. I had been with Advanced Systems when we put together a bid in response to the initial MCAS RFP issued in 1994, but I set out on my own before the contract was finally awarded the following summer.

Between 1995 and 1999 I was just an MCAS groupie, traveling to districts and schools across the state, boom box, mixtapes, and Susan B. Anthony dollars in hand, conducting multimedia workshops about the fledgling state assessment program. Always ready at a moment’s notice to jump in and help the Department or the contractor when they needed assistance.

Anyway. It was the fall of 1999, a dark and stormy night as I recall. The second annual administration of the MCAS was complete, and sitting in front of me, mocking me really, was the item that would from that point forward be known as “the wrench question”.

At that time, however, it was simply Item #2 on the Grade 10 MCAS Mathematics test.

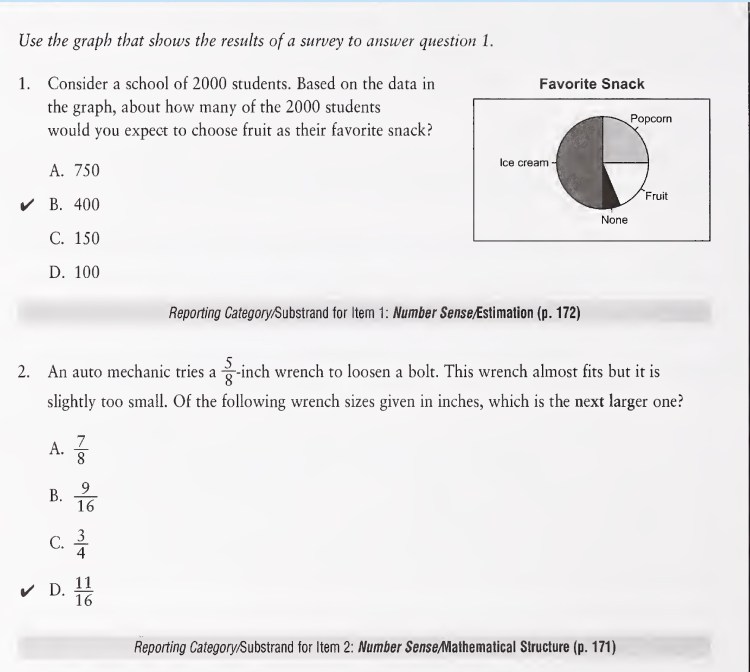

Item #2

This was still a couple of years before we received the directive from the Commissioner to put the “easier” items on the first page of the math tests, but this wasn’t our first rodeo. That’s what we were trying to do.

Things started out fine. 77% of students answered Item 1 correctly.

This was at a time when 51% of tenth-grade students were still performing at the lowest achievement level and the average raw score of 24.9 represented about 40% of the total possible points. Cranky, old professors were claiming we deliberately removed all of the easy items from the test so that kids would fail.

77% of students answering Item 1 correctly was sweet.

We expected no less from Item 2.

The result?

Officially, it was reported that 27% of Massachusetts tenth-grade students selected the correct response, “d. 11/16”. When all of the discrepancies were resolved and data were cleaned, it rounded to 28%.

28%

In essence, the item required students to place the following fractions in order from least to greatest:

| 5/8 | 7/8 | 9/16 | 3/4 | 11/16 |
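For anyone who wants to check the arithmetic, here is a minimal sketch of the comparison. It assumes the item asked for the next wrench size up from a 5/8-inch wrench, and it uses the option letters reported in this post; the variable names are mine, not anything from the test.

```python
from fractions import Fraction

# Wrench sizes in inches: the 5/8-inch wrench plus the four answer options.
# Option letters follow the ones reported in this post.
too_small = Fraction(5, 8)
options = {
    "a": Fraction(7, 8),
    "b": Fraction(9, 16),
    "c": Fraction(3, 4),
    "d": Fraction(11, 16),
}

# Order all five sizes, then express each in sixteenths (the common denominator).
ordered = sorted([too_small, *options.values()])
sixteenths = [int(size * 16) for size in ordered]

# Assumed task: find the smallest option that is strictly larger than 5/8.
next_larger = min(size for size in options.values() if size > too_small)
answer = next(letter for letter, size in options.items() if size == next_larger)

print(sixteenths)           # numerators over 16, least to greatest
print(answer, next_larger)  # the option one size up from 5/8
```

Written over a common denominator of 16, the five sizes are 9, 10, 11, 12, and 14 sixteenths, so the next size up from 10/16 is 11/16.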

What happened?

Sure, there were 3 different denominators, but come on, they were 4, 8, and 16. Based on the current standards, the mathematics in this item is at the fifth-grade level.

28%

You could have just asked the kids to order the fractions if that’s what you were measuring?

OK, there was some “real-life” context to add a dimension of problem-solving and perhaps increase engagement. Fun fact: In real “real life” those two factors often offset each other.

28%

Well, “auto mechanic” and “wrench” – obviously, the item was biased toward boys.

Yeah, I know what you’re thinking: Were we living in caves? But it was actually considered best practice to think in that gender-normative way back then. And boys did perform better than girls on the item (33% vs. 23%). Still, 33% is nothing to write home about, and if there had been any writing home it likely would have been done by girls.

28%

It was a trick question. You didn’t place the options in numerical order. You wanted kids to pick ‘Option A’ just because it was larger even though it wasn’t the next larger.

26% of students did select Option A (7/8), but even more, 35%, selected Option C (3/4). Silver lining, I guess, is that the smallest percentage of students, 10%, selected Option B (9/16), which was the lone option smaller than the original 5/8-inch wrench.

28%

It’s cultural – the kids are used to metric wrenches. There’s no such thing as an 11/16-inch wrench.

Wish I was making up these two. No, kids aren’t used to metric anything. Yes, there are 11/16-inch wrenches.

28%

The test didn’t count. The kids weren’t engaged.

Seriously. You think that the kids lost interest between the first and second item.

We had seen a lack of engagement in the previous state assessment program, where no individual student results were returned. A majority of tenth-grade students didn’t bother to fill out their name on the test book cover. Non- and nonsensical response was rampant. Here, non-response was around 1%.

It is true, however, as we learned a couple of years later, when the tests counted for graduation, that there are different degrees of engagement, both before and during a test.

Even More Data

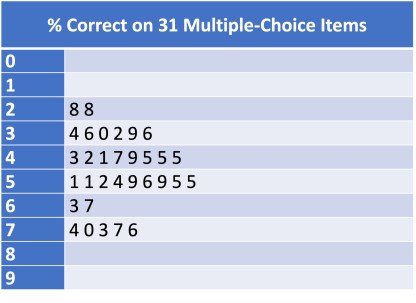

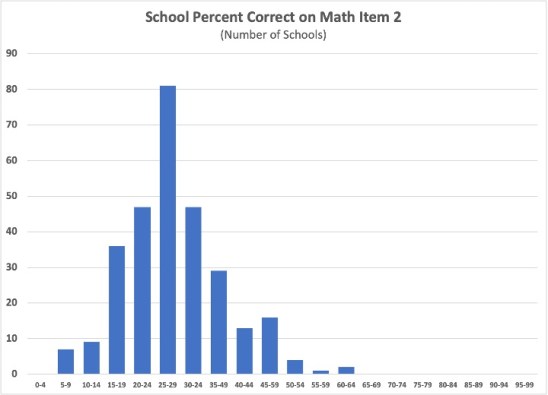

Item 2 tied with one other item as the most difficult multiple-choice item on the test.

Across the state, school performance on the item was distributed fairly normally, with a little tail stretching out to 60-64 percent correct. There was not one school where students performed as we had hoped and expected on the item.

What goes through the minds of students as they encounter our carefully crafted and finely-tuned test items?

Just What Makes an Item Difficult?

It’s been nearly a quarter-century now.

I have used ‘the wrench item’ as an ice breaker at workshops and family gatherings – yeah, I’m that guy. Performance has always been better than 28%, usually much better. (A teacher at one of my workshops actually took the test as a tenth-grade student in 1999. She didn’t remember the item.)

There have been other items that baffled over the years, of course. This item, on a graduation test, was answered correctly by 41% of all eleventh-grade students and by only 10% of those students who did not meet the graduation requirement.

And there was the item that asked students to calculate the total amount of sales tax on a $21,000 car with a sales tax of 7%. Performance was similar to the other items mentioned.

This time, however, a mother had demanded to see her son’s test booklet, furious at us and not believing that he had failed the test. We set up an appointment and she came to the Department with her son in tow.

When she looked at that item and his response that the sales tax on the $21,000 car was $147,000, she looked at him, shook her head, and thanked us for our time. (She actually smacked him on the shoulder and offered a pithy comment about his response, but I didn’t think I should write that.)
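The arithmetic behind that response is easy to sketch. The snippet below is only an illustration with names I made up; the idea that the student multiplied by 7 instead of by 7 percent is my guess at what happened, not something the test booklet told us.

```python
# Figures from the item: a $21,000 car and a 7% sales tax.
price = 21_000
rate_percent = 7

# Expected answer: 7 percent of the price.
correct_tax = price * rate_percent // 100

# Assumption: the student multiplied by 7 rather than by 7/100.
student_response = 147_000
multiplied_by_whole_number = price * rate_percent

print(correct_tax)
print(multiplied_by_whole_number == student_response)
print(student_response // correct_tax)  # how many times too large the response was
```

Under that assumption, the response is exactly 100 times the expected $1,470, and seven times the price of the car itself.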

For me, the ‘wrench item’ will always be my white whale – the one that got away from us.

It didn’t hurt the testing program. It didn’t even hurt that test.

It did make me more cautious about the way that I interpreted and used state test results, particularly results of individual items.

I look over at that wrench and think, that’s a very good thing.
